Official Challenge (Army of the Czech Republic)

Support for Military Operations in Urban Environments

How can modern technologies contribute to better awareness in urbanized spaces, ensure communication, and increase situational awareness during operations in complex urban environments?

The Problem We Address

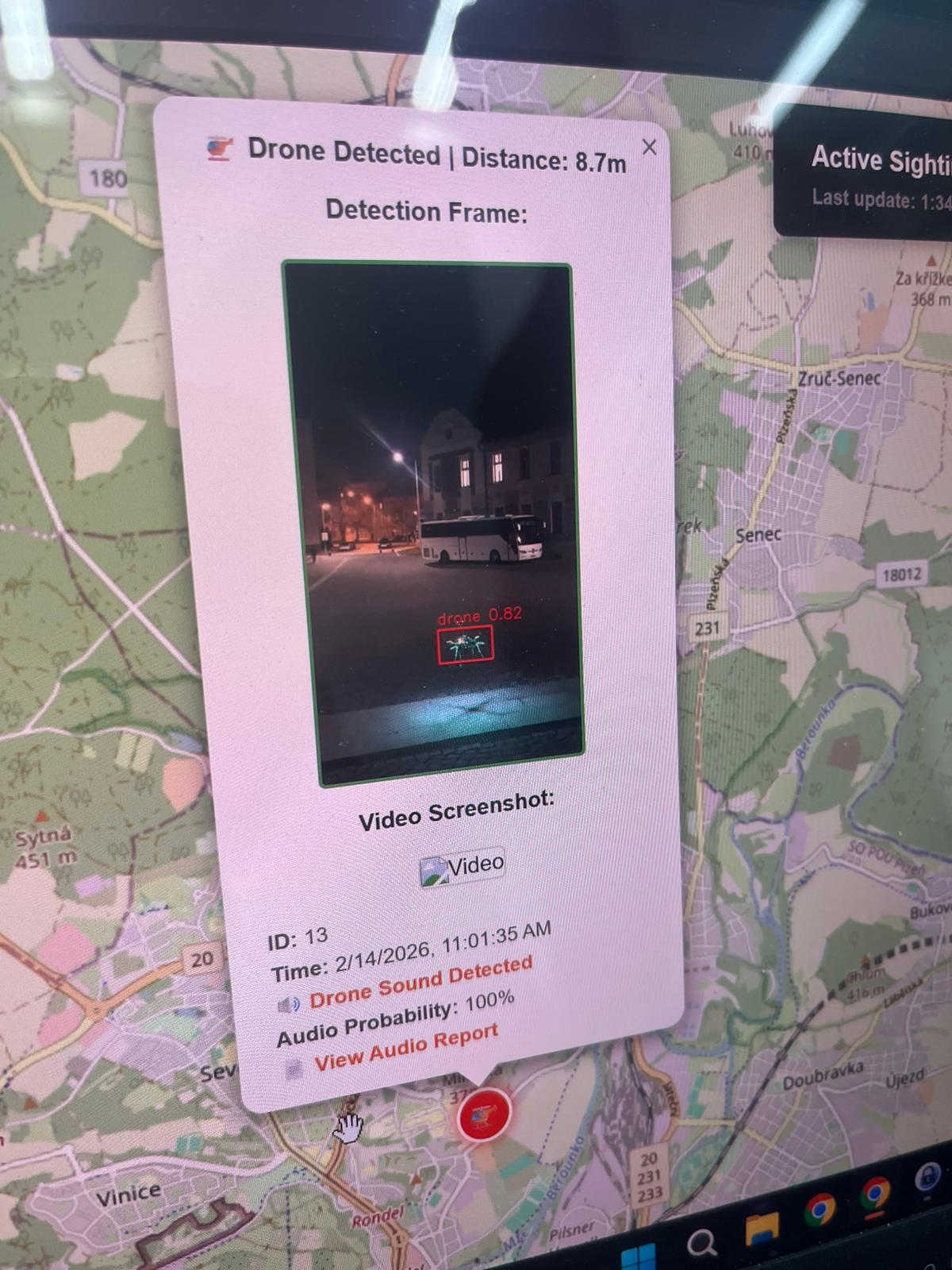

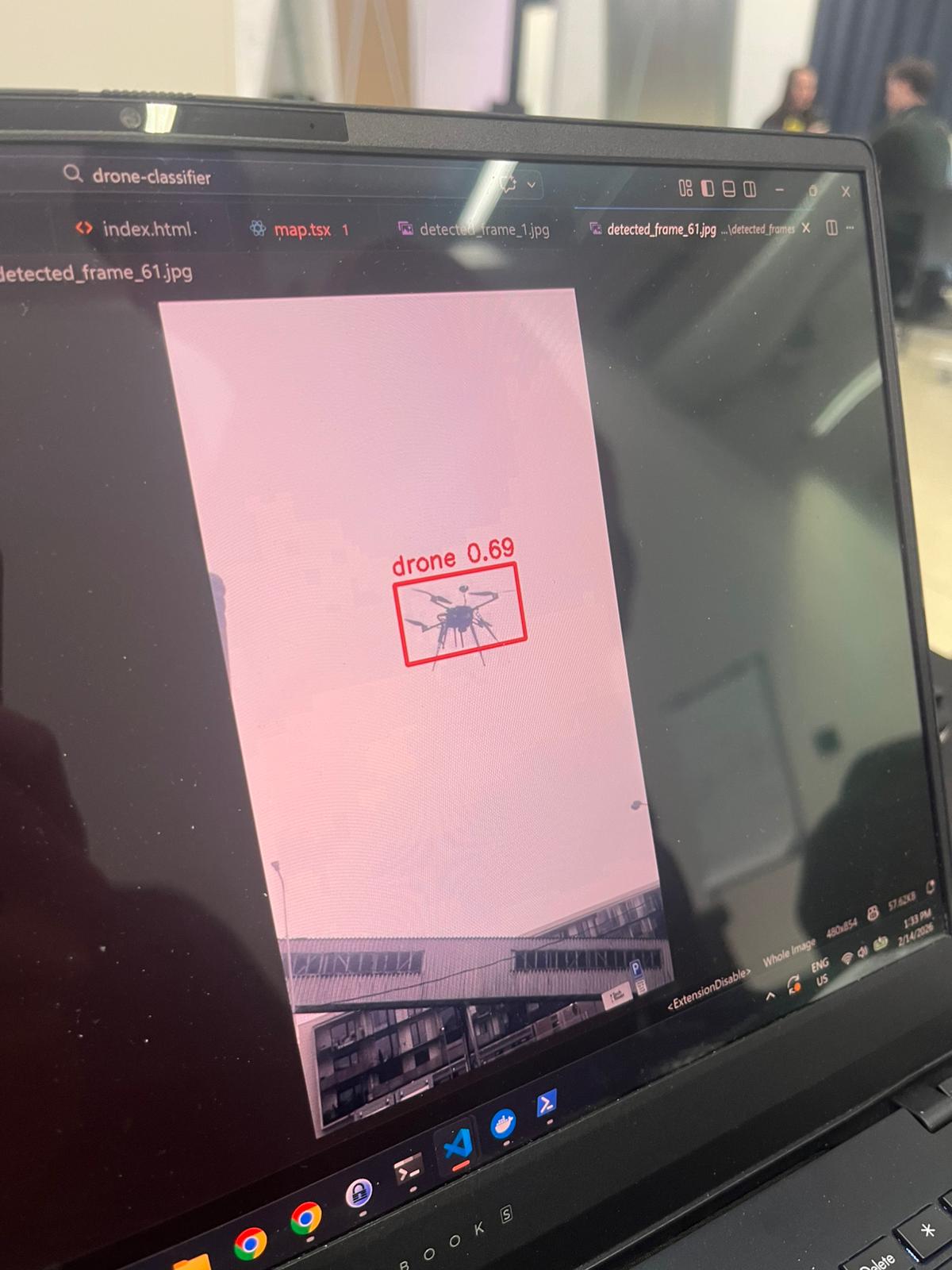

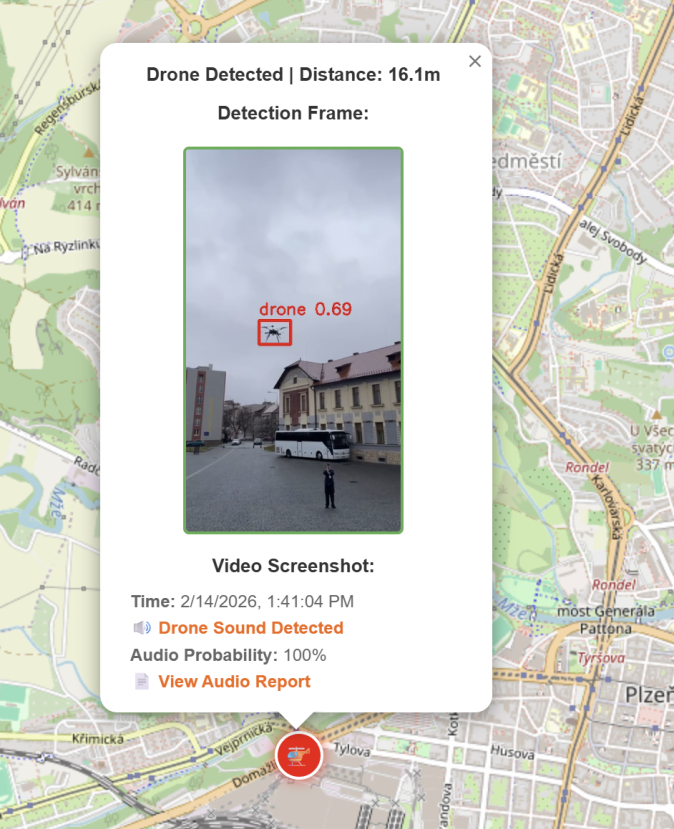

In urban environments, it is increasingly difficult to quickly assess what is flying overhead. Drone Classifier collects community sightings in two ways: actively, when users upload drone video, and passively, when a microphone captures drone audio. Both kinds of data are sent to a central server for automated analysis.

Outputs and Value

Every sighting produces a structured record: timestamp, location, classification, and supporting media. The map then shows movement patterns and trends over time. Everyday users get a local safety overview; professional teams (e.g., crisis management or security analytics) get faster situational awareness for decision-making.
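As a minimal sketch of what such a record could look like, the following Python dataclass holds the four elements named above and serializes them to JSON for upload. The class name `Sighting`, the field names, the `source` field, and the example values are illustrative assumptions, not the project's actual schema.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass
class Sighting:
    """Hypothetical sighting record; all field names are assumptions."""
    timestamp: datetime          # when the sighting was captured
    latitude: float              # location, decimal degrees
    longitude: float
    classification: str          # label produced by the analysis step
    source: str                  # "video" (active) or "audio" (passive)
    media_uris: list[str] = field(default_factory=list)  # supporting media

    def to_json(self) -> str:
        """Serialize the record, writing the timestamp as ISO 8601 text."""
        payload = asdict(self)
        payload["timestamp"] = self.timestamp.isoformat()
        return json.dumps(payload)


# Example values are invented for illustration only.
example = Sighting(
    timestamp=datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc),
    latitude=50.08,
    longitude=14.43,
    classification="quadcopter",
    source="audio",
    media_uris=["clip.wav"],
)
print(example.to_json())
```

Keeping the record flat and JSON-serializable makes it easy to plot on a map and to group by time or classification later.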

Why This Matters (Benefits)

The core benefit is turning fragmented observations into a shared intelligence layer. Citizens become better informed about what is happening above them and can make safer, better decisions in real time.

- People are more informed about local aerial activity and potential risk context.

- People who cannot take part in combat can still contribute by supplying non-combat intelligence during urban warfare.

- For the state, this creates a major tactical advantage by opening a new large-scale information source for analysis.

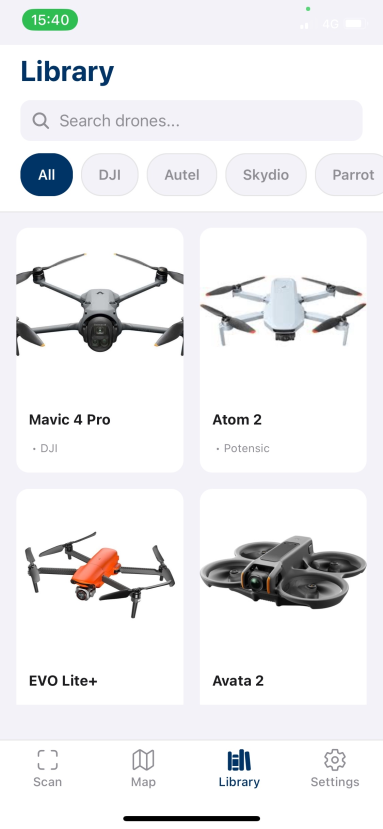

Collected Drone Library

We also maintain a visual library of collected drone sightings, helping users compare detections, inspect patterns, and better understand what has already been observed in the field.

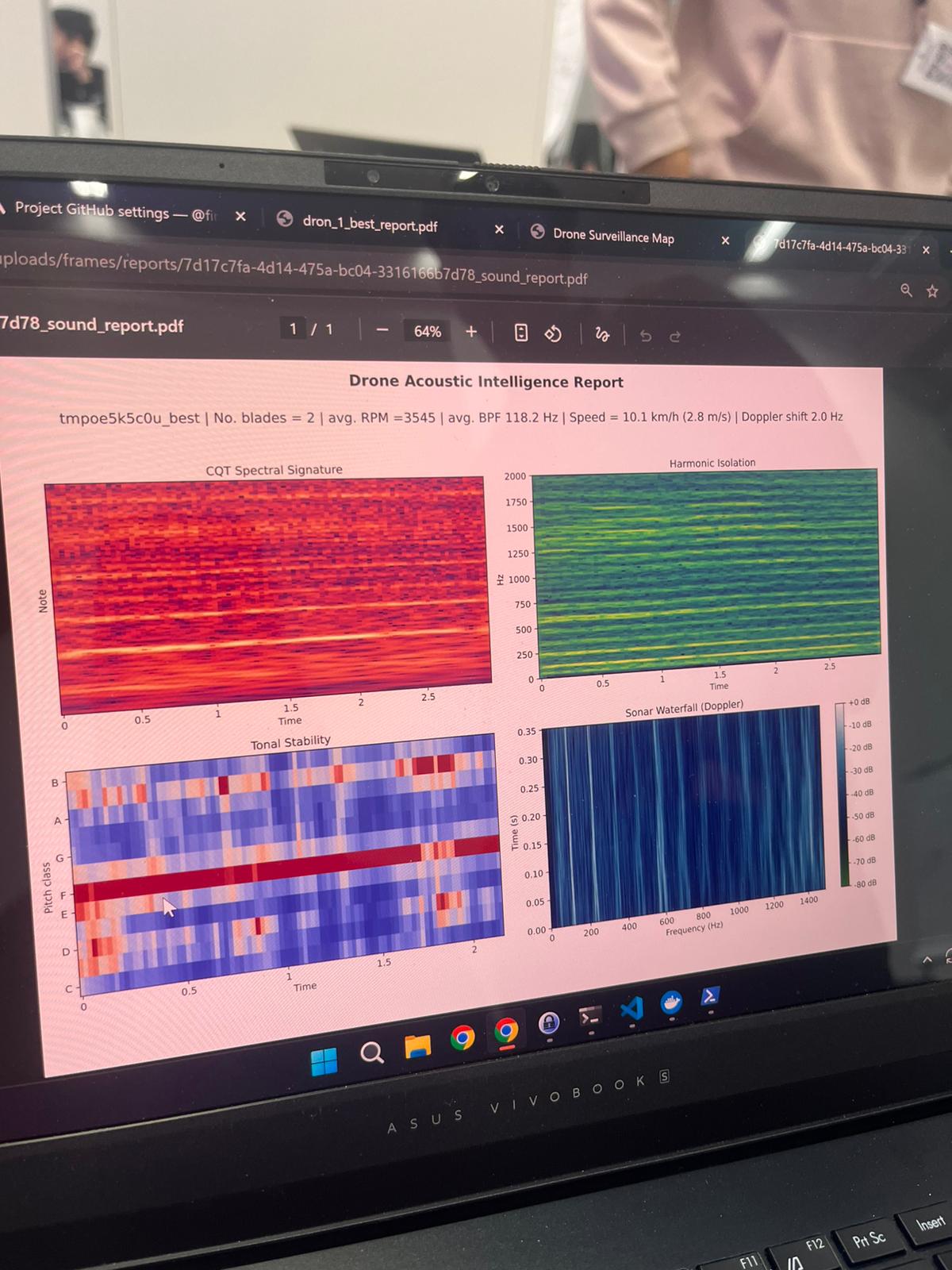

Acoustic Analysis

We designed an advanced acoustic layer that goes beyond simple "drone / no-drone" detection. From the frequency structure and harmonic patterns, we can estimate rotor count, infer the drone's weight category, and provide an approximate manufacturer match. We also estimate propeller RPM and approximate flight speed from the Doppler shift. On top of that, we generate a drone-specific fingerprint from its frequency signature, which helps match repeated sightings of the same or similar platforms over time.
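Two of these estimates follow from standard acoustics and can be sketched in a few lines. The sketch below is not the project's implementation; the function names and default values are assumptions. It assumes the blade-pass frequency has already been extracted from the spectrum, that the number of blades per propeller is known, and that the drone flies roughly straight toward and then away from a stationary microphone, so the same tone is heard at a higher pitch on approach and a lower pitch on departure.

```python
SPEED_OF_SOUND_M_S = 343.0  # dry air at about 20 degrees Celsius


def rpm_from_blade_pass(blade_pass_hz: float, blades_per_rotor: int = 2) -> float:
    """Propeller RPM from the blade-pass frequency.

    Each blade passes a fixed point once per revolution, so
    blade_pass_hz = (rpm / 60) * blades_per_rotor.
    """
    if blades_per_rotor <= 0:
        raise ValueError("blades_per_rotor must be positive")
    return 60.0 * blade_pass_hz / blades_per_rotor


def speed_from_doppler(f_approach_hz: float, f_recede_hz: float,
                       c: float = SPEED_OF_SOUND_M_S) -> float:
    """Source speed (m/s) from one tone heard while approaching and receding.

    For a moving source and a stationary listener:
        f_approach = f0 * c / (c - v),  f_recede = f0 * c / (c + v)
    Solving for v eliminates the unknown emitted frequency f0:
        v = c * (f_approach - f_recede) / (f_approach + f_recede)
    """
    total = f_approach_hz + f_recede_hz
    if total <= 0:
        raise ValueError("frequencies must be positive")
    return c * (f_approach_hz - f_recede_hz) / total
```

For instance, a 200 Hz blade-pass tone from two-bladed propellers corresponds to 6000 RPM. The Doppler estimate gives only the speed component along the line to the microphone, so a drone passing at a distance will read slower than it actually flies.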

Gamification and Next Direction

The app is also designed around a "Pokémon GO for drone spotting" concept, where users are motivated to collect, validate, and improve sightings. Over time, this builds a more robust dataset, which supports more accurate models and better real-world coverage.